
Learning Objectives

By the end of this lesson, you will be able to:

- Define the three primary subagent roles in a Claude multi-agent system

- Explain the responsibilities and constraints of each role

- Identify which role is appropriate for a given task description

- Describe how roles interact within a system and how system prompts differ per role

Subagent Roles: Orchestrators, Workers, and Validators

Role Definition in a Multi-Agent System

In a multi-agent system, each agent has a defined role that shapes its system prompt, its tool access, and the context it receives. Role definition is not a labelling convention — it is a design constraint. An agent’s role determines what it knows about the system, what it is permitted to do, and what it is expected to return.

When roles are defined clearly and enforced through system prompt design and tool access controls, the system behaves predictably and failures are attributable. When roles blur — when a worker begins making coordination decisions, or when an orchestrator attempts to execute subtasks directly — the system produces behaviour that is difficult to reason about and harder to debug.

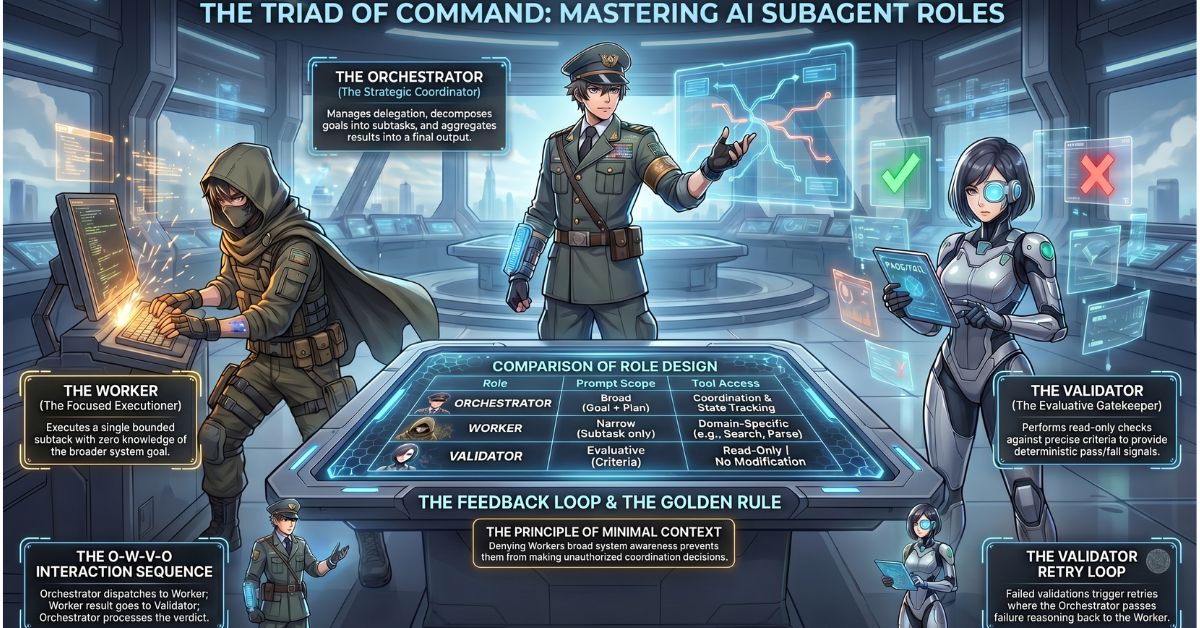

The three primary roles in a Claude multi-agent system are the orchestrator, the worker, and the validator.

The Orchestrator Role

The orchestrator receives the overall goal and takes responsibility for achieving it through delegation. It does not execute subtasks itself. Its function is coordination: decompose the goal into subtasks, dispatch each subtask to the appropriate worker, collect the results, and assemble them into a coherent output.

The orchestrator maintains the broadest view of any agent in the system. It holds the original instruction, the decomposition plan, the state of each delegated subtask, and the aggregation logic that combines worker outputs into a final result. It also carries the error-handling responsibility — when a worker returns a failure signal or an output that does not meet expectations, the orchestrator decides whether to retry, invoke a fallback, or escalate.
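Here is a minimal sketch of the state that only the orchestrator holds: the original instruction, the decomposition plan, the status of each delegated subtask, and a plan-ordered aggregation step. It assumes a plain in-memory object stands in for whatever state store a real system uses. The names `OrchestratorState`, `Status`, and `ready_to_aggregate` are hypothetical.

```python
from dataclasses import dataclass, field
from enum import Enum


class Status(Enum):
    PENDING = "pending"
    DISPATCHED = "dispatched"
    PASSED = "passed"
    FAILED = "failed"


@dataclass
class OrchestratorState:
    """Hypothetical container for everything only the orchestrator knows."""
    instruction: str                      # the original, overall goal
    plan: list[str]                       # ordered subtask IDs from decomposition
    status: dict[str, Status] = field(default_factory=dict)
    results: dict[str, object] = field(default_factory=dict)
    attempts: dict[str, int] = field(default_factory=dict)

    def __post_init__(self) -> None:
        # Every planned subtask starts out pending with zero attempts.
        for subtask_id in self.plan:
            self.status.setdefault(subtask_id, Status.PENDING)
            self.attempts.setdefault(subtask_id, 0)

    def mark_dispatched(self, subtask_id: str) -> None:
        self.status[subtask_id] = Status.DISPATCHED
        self.attempts[subtask_id] += 1

    def record(self, subtask_id: str, result: object, passed: bool) -> None:
        self.status[subtask_id] = Status.PASSED if passed else Status.FAILED
        if passed:
            self.results[subtask_id] = result

    def ready_to_aggregate(self) -> bool:
        # Aggregation waits until every planned subtask has passed.
        return all(s is Status.PASSED for s in self.status.values())

    def aggregate(self) -> list[object]:
        if not self.ready_to_aggregate():
            raise RuntimeError("cannot aggregate: some subtasks have not passed")
        # Return results in plan order, not completion order.
        return [self.results[s] for s in self.plan]
```

The attempt counter gives the orchestrator what it needs to make the retry, fallback, or escalate decision described above.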

Because the orchestrator manages the workflow rather than executing it, its system prompt reflects that breadth. An orchestrator system prompt typically includes the overall task objective, a description of the available workers and what each one handles, the delegation format the orchestrator must use, the aggregation logic it must apply to worker outputs, and the error-handling rules it must follow when a worker fails. The orchestrator’s tool access is oriented toward coordination — logging, state tracking, and worker invocation — rather than domain execution.
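The sketch below assembles an orchestrator system prompt from the five components just listed. The section headings inside the prompt, the `WorkerSpec` type, and the coordination tool names are illustrative assumptions, not a required format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class WorkerSpec:
    name: str
    handles: str  # one-line description of the subtask type this worker owns


def build_orchestrator_prompt(
    objective: str,
    workers: list[WorkerSpec],
    delegation_format: str,
    aggregation_rule: str,
    error_rules: list[str],
) -> str:
    """Compose a broad, coordination-oriented system prompt (hypothetical layout)."""
    worker_lines = "\n".join(f"- {w.name}: {w.handles}" for w in workers)
    error_lines = "\n".join(f"- {rule}" for rule in error_rules)
    return (
        "You are the orchestrator. Do not execute subtasks yourself; delegate them.\n\n"
        f"## Objective\n{objective}\n\n"
        f"## Available workers\n{worker_lines}\n\n"
        f"## Delegation format\n{delegation_format}\n\n"
        f"## Aggregation\n{aggregation_rule}\n\n"
        f"## Error handling\n{error_lines}\n"
    )


# Hypothetical coordination-only tool names: no search, parsing, or rendering tools.
ORCHESTRATOR_TOOLS = ["dispatch_worker", "log_event", "update_state"]
```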

The Worker Role

The worker receives a specific, bounded subtask from the orchestrator and executes it. It has no knowledge of the broader system goal, the other workers operating in the same pipeline, or the aggregation logic the orchestrator will apply to its output. It knows only what it has been asked to do and what tools it has available to do it.

This intentional narrowness is a feature, not a limitation. A worker that operates without awareness of the broader system has little opportunity to exceed its scope inadvertently. It receives input, applies its tools, and returns a structured result. That result is formatted according to the output specification its system prompt defines, not according to the worker’s own judgement about what the orchestrator might find useful.

A worker’s system prompt is narrow by design. It specifies the exact subtask the worker is responsible for, the input format it should expect, the output format it must return, the tools it has access to, and any constraints on its behaviour. It does not include the overall task objective, the names of other workers, or any instruction that would lead the worker to reason about its role in the broader pipeline.
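A worker prompt builder can enforce this narrowness structurally. In the sketch below, the builder’s input has no field for the overall objective or for other workers, so that information cannot leak into the prompt by accident. `WorkerTask`, `build_worker_prompt`, and the prompt layout are hypothetical names and choices made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class WorkerTask:
    subtask: str             # the exact, bounded job
    input_format: str        # what the worker should expect to receive
    output_format: str       # what it must return, e.g. a JSON shape description
    tools: tuple[str, ...]   # the only tools it may use
    constraints: tuple[str, ...] = ()


def build_worker_prompt(task: WorkerTask) -> str:
    """Narrow by construction: no objective, no pipeline, no peer workers."""
    constraint_lines = "\n".join(f"- {c}" for c in task.constraints) or "- None"
    return (
        f"## Task\n{task.subtask}\n\n"
        f"## Input\n{task.input_format}\n\n"
        f"## Output (return exactly this format)\n{task.output_format}\n\n"
        f"## Tools\n{', '.join(task.tools)}\n\n"
        f"## Constraints\n{constraint_lines}\n"
    )
```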

Worker tool access is bounded to the minimum required for its assigned subtask. A research worker has access to search and fetch tools. An extraction worker has access to parsing and structuring tools. A formatting worker has access to template and rendering tools. Granting a worker access to tools outside its domain introduces both security risk and the possibility that the worker will use those tools in ways the orchestrator did not anticipate.
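The minimum-tools rule can also be enforced outside the prompt. One option is an allowlist checked at call time, which blocks a worker from invoking a tool even if the model requests it. The registry below is a hypothetical sketch. Its role and tool names mirror the examples above, and the tool functions are stubs.

```python
from typing import Callable

# Per-role allowlists mirroring the examples in the text (illustrative names).
ALLOWLISTS: dict[str, frozenset[str]] = {
    "research_worker": frozenset({"search", "fetch"}),
    "extraction_worker": frozenset({"parse", "structure"}),
    "formatting_worker": frozenset({"template", "render"}),
}


class ToolRegistry:
    """Hypothetical gatekeeper between agents and tool implementations."""

    def __init__(self, tools: dict[str, Callable[..., object]]):
        self._tools = tools

    def call(self, role: str, tool_name: str, *args: object, **kwargs: object) -> object:
        allowed = ALLOWLISTS.get(role, frozenset())  # unknown roles get no tools
        if tool_name not in allowed:
            # Refuse rather than silently run a tool outside the role's domain.
            raise PermissionError(f"{role} may not call {tool_name!r}")
        return self._tools[tool_name](*args, **kwargs)
```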

The Validator Role

The validator receives the output of a worker and checks it against a defined set of criteria. It does not re-execute the subtask; it evaluates the result the worker has already produced and returns one of two outcomes: pass, meaning the output meets the required standard and the orchestrator can accept it for aggregation; or fail, meaning the output does not meet the standard, accompanied by a structured explanation of why it failed.
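The two validator outcomes can be represented as a small result type. This sketch assumes failure reasons are short strings. The `ValidationResult` name, its fields, and the consistency checks are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ValidationResult:
    passed: bool
    reasons: tuple[str, ...] = ()  # structured explanation, present only on failure

    def __post_init__(self) -> None:
        # A failure without reasons gives the orchestrator nothing to act on.
        if not self.passed and not self.reasons:
            raise ValueError("a failing result must include at least one reason")
        if self.passed and self.reasons:
            raise ValueError("a passing result should not carry failure reasons")

    @classmethod
    def ok(cls) -> "ValidationResult":
        return cls(passed=True)

    @classmethod
    def fail(cls, *reasons: str) -> "ValidationResult":
        return cls(passed=False, reasons=tuple(reasons))
```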

When a validator returns a failure, the orchestrator receives that failure signal and decides how to respond. A common pattern is for a validator failure to trigger a retry loop: the orchestrator re-dispatches the subtask to the worker, optionally including the validator’s failure reasoning as additional context so the worker can correct its approach. The loop continues until the validator passes the output or a maximum retry threshold is reached, at which point the orchestrator escalates or degrades gracefully.
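The retry loop can be sketched as follows. The `worker` and `validator` callables stand in for real agent invocations, such as a model call made with the worker’s or validator’s system prompt. Their signatures, the `max_retries` default, and the `EscalationError` name are assumptions made for illustration.

```python
from typing import Callable, Optional

# Hypothetical signatures: a worker takes the subtask input plus optional
# feedback from a previous failed validation; a validator returns (passed, reasons).
Worker = Callable[[str, Optional[list[str]]], dict]
Validator = Callable[[dict], tuple[bool, list[str]]]


class EscalationError(RuntimeError):
    """Raised when the retry threshold is reached without a passing output."""


def run_with_validation(
    subtask_input: str,
    worker: Worker,
    validator: Validator,
    max_retries: int = 2,
) -> dict:
    feedback: Optional[list[str]] = None
    # One initial attempt plus up to max_retries re-dispatches.
    for _ in range(1 + max_retries):
        output = worker(subtask_input, feedback)
        passed, reasons = validator(output)
        if passed:
            return output
        # Feed the validator's reasoning back so the worker can correct its approach.
        feedback = reasons
    raise EscalationError(
        f"no passing output after {1 + max_retries} attempts; last reasons: {feedback}"
    )
```

A caller that prefers to degrade gracefully can catch `EscalationError` and substitute a fallback result instead of escalating.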

The validator’s system prompt defines the evaluation criteria precisely. Vague criteria — “check that the output is correct” — produce inconsistent validation behaviour. Precise criteria — “verify that the output contains exactly four fields, that the date field conforms to ISO 8601 format, and that the confidence score falls between 0 and 1” — produce deterministic pass/fail decisions that the orchestrator can act on reliably.
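The precise criteria quoted above translate almost directly into a deterministic check. The sketch below makes several assumptions: the worker output is a JSON object, "ISO 8601" means the `YYYY-MM-DD` calendar-date form only, and "between 0 and 1" is inclusive. The `validate_record` name is hypothetical.

```python
import json
import re
from datetime import date

ISO_DATE = re.compile(r"\d{4}-\d{2}-\d{2}")  # YYYY-MM-DD form only (an assumption)


def validate_record(raw: str) -> tuple[bool, list[str]]:
    """Deterministic pass/fail against the three criteria in the text."""
    try:
        record = json.loads(raw)
    except json.JSONDecodeError as exc:
        return False, [f"output is not valid JSON: {exc.msg}"]
    if not isinstance(record, dict):
        return False, ["output must be a JSON object"]

    reasons: list[str] = []
    if len(record) != 4:
        reasons.append(f"expected exactly 4 fields, found {len(record)}")

    raw_date = record.get("date")
    if not isinstance(raw_date, str) or not ISO_DATE.fullmatch(raw_date):
        reasons.append("date must be an ISO 8601 calendar date (YYYY-MM-DD)")
    else:
        try:
            date.fromisoformat(raw_date)  # rejects impossible dates like 2024-02-30
        except ValueError:
            reasons.append(f"date {raw_date!r} is not a real calendar date")

    conf = record.get("confidence")
    # bool is a subclass of int in Python, so exclude it explicitly.
    if isinstance(conf, bool) or not isinstance(conf, (int, float)) or not 0 <= conf <= 1:
        reasons.append("confidence must be a number between 0 and 1")

    return (not reasons), reasons
```

The validator collects every failure rather than stopping at the first, so one failed run gives the worker all of its corrections at once.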

Validator tool access is typically read-only. A validator that can modify the worker’s output is no longer validating — it is editing, which conflates two distinct responsibilities and obscures whether the worker’s original output was acceptable.
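In Python, one lightweight way to give a validator a read-only view of a worker’s output is `types.MappingProxyType`, which wraps a dict and rejects item assignment. This is a sketch only. The proxy is shallow, so nested lists or dicts would still be mutable, and a production system would more likely enforce read-only access through the validator’s tool permissions. `run_validator` and `sneaky_validator` are hypothetical names.

```python
from types import MappingProxyType
from typing import Any, Callable, Mapping


def run_validator(
    worker_output: dict[str, Any],
    check: Callable[[Mapping[str, Any]], bool],
) -> bool:
    """Hand the validator a read-only view so it can evaluate but not edit."""
    view = MappingProxyType(worker_output)  # shallow: only top-level keys are protected
    return check(view)


def sneaky_validator(output: Mapping[str, Any]) -> bool:
    # A validator that tries to "fix" the output instead of judging it.
    output["status"] = "ok"  # raises TypeError on a MappingProxyType
    return True
```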

How the Roles Interact

The interaction pattern between roles follows a defined sequence. The orchestrator dispatches a subtask to a worker. The worker executes the subtask and returns a structured result. The orchestrator passes that result to a validator. The validator evaluates the result and returns a pass or fail signal. If the validator passes the result, the orchestrator accepts it for aggregation. If the validator fails the result, the orchestrator retries the worker or invokes a fallback.
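Reduced to plain functions, the sequence looks roughly like the sketch below. Each agent is a Python callable standing in for a model call, and `fallbacks` holds hypothetical alternative workers. The `trace` list exists only to show the order of hand-offs and which role makes each decision.

```python
from typing import Callable

Agent = Callable[[str], str]   # stand-in for a worker model call
Check = Callable[[str], bool]  # stand-in for a validator model call


def orchestrate(
    subtasks: dict[str, str],
    workers: dict[str, Agent],
    fallbacks: dict[str, Agent],
    validator: Check,
    trace: list[str],
) -> dict[str, str]:
    """Run orchestrator -> worker -> validator -> orchestrator once per subtask."""
    accepted: dict[str, str] = {}
    for subtask_id, payload in subtasks.items():
        trace.append(f"orchestrator -> worker:{subtask_id}")
        result = workers[subtask_id](payload)
        trace.append(f"worker -> orchestrator:{subtask_id}")
        trace.append(f"orchestrator -> validator:{subtask_id}")
        passed = validator(result)
        trace.append(f"validator -> orchestrator:{subtask_id}:{'pass' if passed else 'fail'}")
        if not passed and subtask_id in fallbacks:
            # The orchestrator, not the worker or validator, decides what happens next.
            trace.append(f"orchestrator -> fallback:{subtask_id}")
            result = fallbacks[subtask_id](payload)
            passed = validator(result)
        if not passed:
            raise RuntimeError(f"subtask {subtask_id!r} failed validation; escalating")
        accepted[subtask_id] = result
    return accepted
```

The fallback’s output goes through the same validator, so the fallback path is held to the same standard as the primary worker.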

This sequence — orchestrator → worker → validator → orchestrator — creates a feedback loop that catches errors before they propagate into the aggregated output. Not every system requires a validator at every step. Validators add latency and consume additional context window resources. They are most valuable at the boundaries between high-stakes subtasks — where a flawed output would be difficult to detect downstream and costly to correct.
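Because validators cost latency and context, a pipeline might attach them only where a flawed output would be hard to catch later. The sketch below marks each step with a hypothetical `high_stakes` flag and routes only flagged steps through validation. The step names and the reasoning in the comments are illustrative assumptions. Deciding what counts as high-stakes remains a design judgement.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Step:
    name: str
    high_stakes: bool  # would a flawed output be hard to detect downstream?


# Illustrative pipeline; the flags reflect assumed, not measured, risk.
PIPELINE = [
    Step("fetch_sources", high_stakes=False),   # assumed: a bad fetch surfaces quickly
    Step("extract_figures", high_stakes=True),  # assumed: wrong numbers flow silently onward
    Step("format_report", high_stakes=False),
]


def steps_needing_validation(pipeline: list[Step]) -> list[str]:
    """Return the names of steps whose outputs should pass through a validator."""
    return [step.name for step in pipeline if step.high_stakes]
```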

System Prompt Design Per Role

The system prompt is the primary mechanism through which a role is enforced. An agent behaves according to its role because its system prompt defines that role explicitly and provides no instruction that would lead it outside that role’s boundaries.

An orchestrator system prompt is broad. It describes the overall objective, the available workers, the delegation protocol, the aggregation logic, and the error-handling rules. It does not include the detailed execution instructions that belong in a worker’s system prompt.

A worker system prompt is narrow. It describes the specific subtask, the expected input, the required output format, and the permitted tools. It does not include the overall objective, information about other workers, or any instruction that would lead the worker to reason about coordination.

A validator system prompt is evaluative. It describes the criteria against which the worker’s output will be assessed, the format for the pass/fail signal, and the level of detail required in the failure reasoning. It does not include execution instructions or access to tools that would allow it to modify the output it is evaluating.
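The per-role rules above are concrete enough to check mechanically before a system is deployed. The lint below is a hypothetical sketch. It flags a worker prompt that mentions the overall objective or another worker by name. It also flags a validator whose tool list includes anything outside an assumed set of read-only tools. Substring matching will miss paraphrases, so this is a smoke test rather than a guarantee.

```python
def lint_worker_prompt(prompt: str, objective: str, other_workers: list[str]) -> list[str]:
    """Flag orchestrator-level context that has leaked into a worker prompt."""
    problems = []
    lowered = prompt.lower()
    if objective and objective.lower() in lowered:
        problems.append("worker prompt contains the overall objective")
    for name in other_workers:
        if name.lower() in lowered:
            problems.append(f"worker prompt mentions another worker: {name}")
    return problems


READ_ONLY_TOOLS = frozenset({"read_output", "get_schema"})  # hypothetical tool names


def lint_validator_tools(tools: list[str]) -> list[str]:
    """Flag any validator tool not on the read-only list."""
    return [f"validator has non-read-only tool: {t}" for t in tools if t not in READ_ONLY_TOOLS]
```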

The Most Common Role Design Mistake

The most common mistake in multi-agent role design is giving a worker agent too broad a scope. A worker that receives the overall task objective — rather than a specific bounded subtask — begins reasoning about how to achieve that objective. It starts making decisions that belong to the orchestrator: which tools to invoke in what order, how to structure its output for downstream consumption, whether to attempt additional research beyond what it was asked to do.

This behaviour produces results that are inconsistent across runs, difficult to attribute to a specific design decision, and resistant to debugging. The worker is no longer executing a defined subtask — it is improvising an orchestration strategy it was not designed or tested for.

The corrective principle is minimal context: each agent receives the minimum context and tools required for its specific role, and nothing more. This principle applies to all three roles, but its violation is most consequential in worker agents because workers are the execution layer — errors at the execution layer propagate directly into the outputs the orchestrator aggregates.
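One way to apply the minimal-context principle at dispatch time is to project the orchestrator’s full state down to an explicit list of keys for each worker. This is a sketch. The state keys and the `scope_context` helper are hypothetical.

```python
from typing import Any, Mapping


def scope_context(full_state: Mapping[str, Any], required_keys: set[str]) -> dict[str, Any]:
    """Return only the keys a worker needs; fail loudly if one is missing."""
    missing = {key for key in required_keys if key not in full_state}
    if missing:
        raise KeyError(f"state is missing required keys: {sorted(missing)}")
    return {key: full_state[key] for key in required_keys}
```

An explicit key list also makes it easy to audit exactly what context each worker saw on a given run.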

Key Takeaways

- The three primary subagent roles — orchestrator, worker, and validator — each carry distinct responsibilities, operate with distinct levels of system awareness, and require distinctly scoped system prompts and tool access.

- The orchestrator coordinates and aggregates; the worker executes a single bounded subtask; the validator checks the worker’s output against defined criteria and returns a pass or fail signal, which the orchestrator acts on, typically by retrying when the output fails.

- The interaction sequence — orchestrator → worker → validator → orchestrator — creates a structured feedback loop that isolates and corrects errors before they reach the aggregated output.

- Each agent must receive the minimum context and tools required for its role; workers given orchestrator-level context begin making coordination decisions they were not designed for, producing inconsistent and hard-to-debug behaviour.

What Is Tested

Exam questions on subagent roles describe an agent’s behaviour in a scenario and ask candidates to identify which role that agent is performing. For example, a question may ask candidates to recognise a worker that has exceeded its scope and begun behaving as an orchestrator, or a validator that is modifying outputs rather than evaluating them. A second category of question presents a multi-agent system design and asks candidates to identify which role is missing, such as a pipeline that delegates to workers and aggregates results but contains no validation step. Alternatively, it may ask them to identify a role that has been implemented incorrectly, based on a description of its system prompt or tool access configuration.