Table of Contents

Learning Objectives

By the end of this lesson, you will be able to:

- Identify the key failure modes in agentic Claude systems

- Describe five reliability patterns that mitigate these failures

- Explain safety considerations specific to autonomous agent operation

- Apply these patterns to a production deployment scenario

Safety and Reliability Patterns in Agentic Deployment

Why Reliability Is Harder in Agentic Systems

A single-turn Claude interaction has a bounded failure surface. If the response is incorrect, the failure is visible immediately and contained within that exchange. Agentic systems do not have this property. An agent that executes a multi-step workflow interacts with external systems — APIs, databases, file systems — and makes decisions across multiple iterations. Errors introduced early in the loop propagate through subsequent steps, often without producing an obvious signal that something has gone wrong.

This compounding dynamic means that a small reasoning error in the third iteration of a ten-step workflow may only become apparent in the final output — at which point the agent has already made several tool calls, potentially written to external systems, and consumed significant context window space. Debugging that failure requires reconstructing the full execution trace, not just examining the last response.

Reliability engineering in agentic systems is therefore a design discipline, not an afterthought. The patterns covered in this lesson address the specific failure modes that recur in production agentic deployments.

Key Failure Modes

Tool call errors occur when an external system rejects or fails to respond to a tool call. The cause may be transient — a network timeout, a temporary rate limit — or structural — a malformed request, an authentication failure, a change in the external API’s schema. Claude does not automatically distinguish between transient and structural failures. Without explicit error-handling logic, it may retry indefinitely, escalate incorrectly, or proceed as though the tool call succeeded.

Context loss mid-loop occurs when Claude loses track of the task objective or prior results across iterations. This is most common in long-running workflows where the context window fills progressively with tool results, intermediate outputs, and conversation history. As earlier content is displaced, Claude may begin to contradict prior decisions, re-execute steps it has already completed, or lose the constraints specified in the original system prompt.

Infinite loops occur when an agent retries a failing step without a defined stopping condition. If Claude is instructed to retry a tool call until it succeeds, and the tool call is structurally broken rather than transiently failing, the agent will retry indefinitely — consuming resources, accumulating context, and never reaching a terminal state.

Hallucinated tool parameters occur when Claude constructs a tool call using parameter values that are not supported by the tool’s schema — inventing field names, fabricating identifier values, or supplying data in an unsupported format. These errors are particularly difficult to detect because the tool call may partially succeed, returning a result that appears plausible but is based on incorrect inputs.

Cascading failures in multi-agent systems occur when one worker’s failure propagates to the orchestrator and corrupts downstream results. If the orchestrator passes a failed or incomplete worker output to the next pipeline stage without validation, the downstream agent reasons from corrupted input. The error compounds, and the final output may be incorrect in ways that are difficult to trace to the original source.

Reliability Pattern 1: Retry with Backoff

When a tool call fails, the first response should not be immediate retry or immediate escalation. Many tool call failures are transient — the external system is temporarily unavailable or rate-limited. Retrying after a short delay resolves these failures without escalation.

Retry with exponential backoff means each successive retry waits longer than the previous one: first retry after two seconds, second after four, third after eight, and so on up to a defined maximum. This approach reduces load on the external system during recovery and prevents the agent from hammering a struggling service.

The retry logic must include a maximum retry count. Once that count is exhausted, the agent escalates — returning a structured failure signal to the orchestrator rather than continuing to retry. The escalation path must be defined in the system prompt; an agent that has no escalation instruction will attempt to resolve the failure through improvisation, producing unpredictable behaviour.

Reliability Pattern 2: Validator Checkpoints

A validator checkpoint inserts a validator agent between a worker’s output and the next stage of the pipeline. The validator evaluates the output against defined criteria — format compliance, completeness, value range constraints, logical consistency — before the orchestrator accepts it for aggregation or passes it downstream.

Validator checkpoints catch errors at the point of production rather than at the point of consumption. A worker output that fails validation triggers a retry loop: the orchestrator re-dispatches the subtask to the worker, optionally including the validator’s failure reasoning as additional context. This loop continues until the output passes validation or a maximum retry threshold is reached.

Without validator checkpoints, errors propagate silently through the pipeline. The downstream agent receives a flawed input, reasons from it, and produces a flawed output — compounding the original error in ways that the final output may not make apparent.

Reliability Pattern 3: Maximum Iteration Limits

Every agentic loop must have a defined maximum iteration count. This cap prevents infinite loops by ensuring the agent reaches a terminal state — either task completion or structured failure — within a bounded number of steps.

The maximum iteration limit must be accompanied by a defined behaviour for when the limit is reached. Acceptable terminal behaviours include returning a partial result with a flag indicating incompleteness, returning a structured failure signal to the orchestrator, or escalating to a human checkpoint. An agent that reaches its iteration limit and has no defined terminal behaviour will attempt to resolve the situation through improvisation, which introduces exactly the unpredictability the limit was designed to prevent.

The appropriate iteration limit depends on the task. A research workflow that legitimately requires many tool calls should have a higher limit than a single-step extraction task. Setting the limit too low produces false failures; setting it too high delays the detection of genuine infinite loops.

Reliability Pattern 4: Human-in-the-Loop Checkpoints

For high-stakes or irreversible actions — deleting records, sending communications, executing financial transactions, modifying production systems — the agent must pause and require human approval before proceeding. This checkpoint interrupts the autonomous loop and introduces a human decision point at the moment of highest consequence.

Human-in-the-loop checkpoints are not a fallback for unreliable agents. They are a deliberate design feature for actions whose consequences cannot be undone if the agent’s reasoning turns out to be incorrect. No reliability pattern eliminates the possibility of a reasoning error; human checkpoints ensure that the most consequential actions remain under human authority regardless of the agent’s confidence.

The checkpoint mechanism must be defined in the system prompt. The agent must know when to pause, what information to present for human review, and how to proceed once approval is granted or denied. An agent that is not instructed to pause will not pause.

Reliability Pattern 5: Structured Inter-Agent Communication

Agents in a multi-agent system should pass structured data — specifically JSON — rather than free-form natural language. When an agent passes free text to another agent, the receiving agent must parse and interpret that text before it can act on it. Parsing introduces ambiguity: the receiving agent may extract incorrect values, miss fields, or impose its own interpretation on loosely formatted content.

JSON communication defines the handoff contract explicitly. The sending agent knows the exact fields it must populate. The receiving agent knows the exact fields it will receive. Validation of the handoff is mechanical — a JSON schema check — rather than interpretive. Errors in inter-agent communication are detectable at the handoff boundary rather than downstream in the receiving agent’s reasoning.

Structured communication also makes inter-agent handoffs auditable. A log of JSON payloads passed between agents produces a complete, machine-readable record of what each agent sent and received — a property that free-text communication cannot provide.

Safety Consideration: Minimal Permissions

Each agent should have access only to the tools required for its specific role. A research agent does not need write access to a database. A formatting agent does not need access to an email dispatch tool. Granting agents broad tool access introduces two risks: the agent may invoke a tool it was not designed to use, producing unintended side effects; and a compromised or malfunctioning agent has a larger blast radius.

Minimal permissions is the agentic equivalent of the principle of least privilege in security engineering. It constrains the potential impact of agent errors and makes the system’s behaviour easier to reason about, because each agent’s possible actions are bounded by its tool access.

Safety Consideration: Audit Logging

Every tool call an agent makes — and the result that tool call returns — should be logged in a retrievable, structured format. Audit logs serve two purposes: post-hoc debugging, where a developer reconstructs the execution trace to identify where a failure originated; and compliance, where an organisation must demonstrate what actions its automated systems took and on what basis.

Audit logging is not optional in enterprise or compliance-sensitive deployments. It is a baseline requirement. Systems that do not log tool calls and results cannot be meaningfully audited, and failures in those systems are significantly harder to diagnose.

Anthropic’s Responsible AI Guidelines as a Design Constraint

Anthropic publishes responsible AI guidelines that apply as design constraints to production agentic systems built on Claude. These guidelines address how Claude should handle uncertainty, how it should behave when asked to take irreversible actions, and how it should respond when its instructions conflict with user safety or broader ethical considerations.

Architects designing production agentic systems on Claude should treat these guidelines as architectural inputs — not as policy documents to be reviewed once and set aside. A system designed without reference to these guidelines may produce behaviour that conflicts with them, resulting in unexpected refusals, degraded performance, or outputs that Anthropic’s safety mechanisms flag at runtime.

Key Takeaways

- Agentic systems compound errors across steps and interact with real external systems, making reliability engineering a core architectural discipline rather than an operational concern.

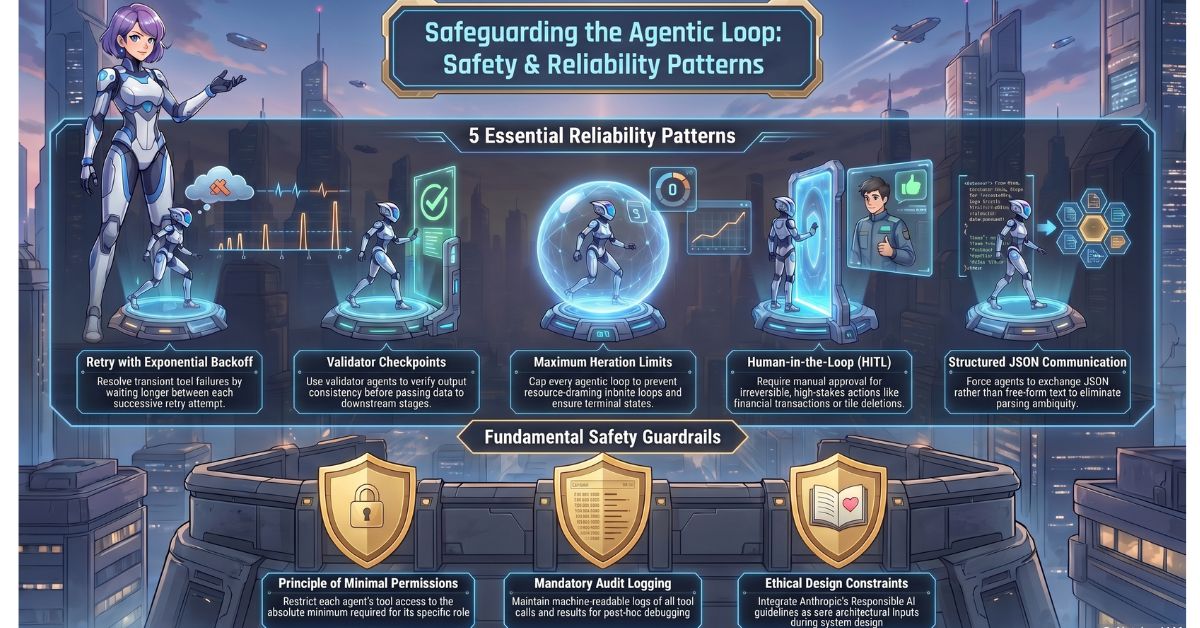

- The five reliability patterns — retry with backoff, validator checkpoints, maximum iteration limits, human-in-the-loop checkpoints, and structured inter-agent communication — each address a distinct failure mode and should be selected based on the failure risk profile of the specific workflow.

- Minimal permissions constrain the blast radius of agent errors and malfunctions; each agent should have access only to the tools its role requires.

- Audit logging is a baseline requirement for enterprise and compliance-sensitive deployments; systems that do not log tool calls and results cannot be meaningfully debugged or audited.

- Anthropic’s responsible AI guidelines function as architectural design constraints for production agentic systems and should be incorporated during system design rather than evaluated after deployment.

What Is Tested

Exam questions on safety and reliability patterns present a system description that contains a specific reliability gap — an agentic loop with no iteration cap, a multi-agent pipeline with no validator between worker output and the next stage, an agent with write access to systems outside its role — and ask candidates to identify the appropriate pattern that addresses that gap. A second category of question describes a failure mode and asks candidates to select which reliability pattern would have prevented it, requiring candidates to map failure modes to patterns precisely rather than select by elimination. Candidates are expected to understand both what each pattern does and which failure mode it is specifically designed to mitigate.